When it comes to a PC's performance, the spotlight almost always goes to the processor and the graphics card. Yet behind the scenes, another component decides what is possible and what is not: how many ports you can use, how much bandwidth peripherals get, how many drives you can attach, and how many PCIe lanes are actually available. It's the chipset, the silent conductor of the motherboard.

Understanding what a chipset does, how it communicates with the CPU, RAM, and peripherals, and how it influences performance lets you evaluate hardware with a more informed eye. When designing a work PC, a gaming machine, or a server for professional hosting, like those underlying the Meteora Web Hosting platforms, the choice of chipset is one of the most strategic decisions you make.

What is the chipset and what role does it play on the motherboard

In simple terms, the chipset is the control logic on the motherboard that manages communication between the CPU and the rest of the system. Many fundamental functions are routed through it, from USB ports to PCI Express lanes, from SATA to networking, along with some power management functions.

In the past, people spoke of a northbridge and a southbridge, two separate chips. Today, many classic northbridge functions, such as the memory controller, are integrated directly into the CPU, while much of the rest is handled by a single chip: the chipset. It is no longer the absolute master of the buses, as it was in older PCs, but it remains the hub to which most peripherals connect.

Each processor family has its own range of dedicated chipsets. In the desktop world, there are different designations for platforms intended for mainstream use, for overclocking, and for professional use. They differ in the number of PCIe lanes offered, the number of latest-generation USB ports, RAID support, integrated Wi-Fi, and advanced management and security functions.

How it works between the CPU, PCIe lanes, and peripherals

To understand how a chipset works, picture the system as a network of roads. The CPU reaches the chipset over a dedicated high-speed link. From the chipset, connections branch out to secondary PCIe slots, USB ports, SATA controllers, and any additional interfaces such as Ethernet, audio, or Wi-Fi.

The PCI Express lanes available in a system, for example, are the sum of those provided directly by the CPU and those made available by the chipset. The CPU's lanes are typically used for the primary graphics card and, increasingly, for high-performance NVMe SSDs. The chipset's lanes serve additional expansion slots, extra network controllers, and other storage units.
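The lane accounting described above is simple addition, and a minimal sketch makes it concrete. The `Platform` class and the lane counts below are hypothetical and chosen only for illustration; they do not describe any specific CPU or chipset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    """Hypothetical platform description; lane counts are illustrative."""
    cpu_lanes: int      # lanes wired directly to the CPU
    chipset_lanes: int  # lanes provided downstream by the chipset

    @property
    def total_lanes(self) -> int:
        # The usable total is the sum of both sources.
        return self.cpu_lanes + self.chipset_lanes


# Illustrative numbers only, not the specification of a real product.
example = Platform(cpu_lanes=20, chipset_lanes=12)

print(f"CPU lanes:     {example.cpu_lanes}")
print(f"Chipset lanes: {example.chipset_lanes}")
print(f"Total lanes:   {example.total_lanes}")
```

Keeping the two sources separate in the model matters because, as the next paragraph explains, chipset lanes share a single path back to the CPU.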

Whenever a device connected to the chipset communicates with the CPU, the traffic passes through this link. If too many bandwidth-hungry devices share it, bottlenecks can appear, not because any individual component is slow, but because the road to the CPU gets crowded. This is one of the reasons why higher-end chipsets offer more lanes, more ports, and better management of shared resources.
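A rough way to reason about this crowding is to compare the combined peak demand of the devices behind the chipset with the capacity of the link to the CPU. The sketch below assumes the CPU-to-chipset link behaves like a PCIe 4.0 x4 link, which is an assumption for illustration and varies by platform. The device list is hypothetical, and the per-lane figures are approximate one-direction values.

```python
# Approximate usable bandwidth per PCIe lane, in GB/s, per direction.
# Derived from the per-lane transfer rate and 128b/130b encoding.
GBPS_PER_LANE = {3: 0.985, 4: 1.969, 5: 3.938}


def link_bandwidth(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth of a PCIe link in GB/s."""
    return GBPS_PER_LANE[gen] * lanes


def oversubscription(uplink_gbps: float, device_peaks_gbps: list[float]) -> float:
    """Ratio of summed device peak demand to uplink capacity.

    A value above 1.0 means the devices could saturate the uplink
    if they all ran at peak at the same time.
    """
    return sum(device_peaks_gbps) / uplink_gbps


# Assumption: the CPU-to-chipset link is equivalent to PCIe 4.0 x4.
uplink = link_bandwidth(gen=4, lanes=4)

# Hypothetical devices attached to the chipset, peak demand in GB/s.
devices = {
    "NVMe SSD (PCIe 4.0 x4)": link_bandwidth(4, 4),
    "10 GbE network card": 1.25,  # 10 Gbit/s divided by 8
    "USB 10 Gbit/s port": 1.25,   # nominal rate divided by 8
}

ratio = oversubscription(uplink, list(devices.values()))
print(f"Uplink capacity: {uplink:.2f} GB/s")
print(f"Peak demand:     {sum(devices.values()):.2f} GB/s")
print(f"Oversubscription ratio: {ratio:.2f}")
```

A ratio above 1.0 does not mean the system is always slow. Devices rarely peak together, so this is a worst-case estimate rather than a prediction of everyday behavior.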

The same applies to USB and SATA ports. The chipset determines how many you can have, of which generation, and at what maximum speed. A machine with few USB 3 ports and only a couple of free SATA ports will struggle to grow, no matter how powerful the CPU is.
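To see why the port generation matters, here is a small sketch that estimates the best-case time to move a file over different interfaces. It uses nominal line rates only, so the results are optimistic lower bounds: real transfers are slower because of encoding and protocol overhead and the speed of the drives themselves. The 50 GB file size is an arbitrary example.

```python
# Nominal line rates in Gbit/s (raw signalling rate, before overhead).
NOMINAL_GBIT_PER_S = {
    "USB 2.0": 0.48,
    "USB 5 Gbit/s": 5.0,
    "USB 10 Gbit/s": 10.0,
    "USB 20 Gbit/s": 20.0,
    "SATA III": 6.0,
}


def best_case_seconds(size_gb: float, interface: str) -> float:
    """Lower bound on transfer time: size in gigabits / nominal rate."""
    size_gbit = size_gb * 8
    return size_gbit / NOMINAL_GBIT_PER_S[interface]


file_size_gb = 50  # arbitrary example size in gigabytes

for name in NOMINAL_GBIT_PER_S:
    seconds = best_case_seconds(file_size_gb, name)
    print(f"{name:<14} at least {seconds:7.1f} s")
```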

How the chipset truly influences performance

It's often thought that the chipset doesn't affect performance as long as the processor is fast. In reality, its impact is felt in several scenarios. The first is scalability. If you want to use multiple NVMe SSDs, capture cards, 10 Gb network cards, and RAID controllers, you need a chipset that offers enough lanes and enough aggregate bandwidth to avoid bogging down the entire system.
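The scalability question can be framed as a lane budget: does every device you plan to install actually fit? The sketch below uses a deliberately simple greedy placement. The `Device` class, the `plan_lanes` function, and all lane counts are hypothetical. Real motherboards wire lanes to specific slots and often disable one port when another is populated, so treat this as a way to think about the budget, not as a model of any particular board.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    lanes: int
    prefers_cpu: bool = False  # bandwidth-critical devices want CPU lanes


def plan_lanes(cpu_lanes: int, chipset_lanes: int, devices: list[Device]):
    """Greedy placement of devices onto CPU or chipset lanes.

    Devices that prefer CPU lanes are handled first and try the CPU
    before the chipset; the rest try the chipset first.
    Returns (placement, unplaced).
    """
    free = {"cpu": cpu_lanes, "chipset": chipset_lanes}
    placement: dict[str, str] = {}
    unplaced: list[str] = []
    # Stable sort: CPU-preferring devices first, original order otherwise.
    for dev in sorted(devices, key=lambda d: not d.prefers_cpu):
        order = ("cpu", "chipset") if dev.prefers_cpu else ("chipset", "cpu")
        for source in order:
            if free[source] >= dev.lanes:
                free[source] -= dev.lanes
                placement[dev.name] = source
                break
        else:
            # Neither source had enough free lanes for this device.
            unplaced.append(dev.name)
    return placement, unplaced


# Hypothetical build on a platform with 20 CPU lanes and 8 chipset lanes.
build = [
    Device("Graphics card", 16, prefers_cpu=True),
    Device("NVMe SSD A", 4, prefers_cpu=True),
    Device("NVMe SSD B", 4),
    Device("10 GbE network card", 4),
    Device("Capture card", 4),
]

placement, unplaced = plan_lanes(cpu_lanes=20, chipset_lanes=8, devices=build)
for name, source in placement.items():
    print(f"{name:<22} -> {source}")
print("Does not fit:", unplaced)
```

In this example the capture card is left without lanes, which is the kind of limit a chipset with a larger lane count is meant to avoid.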

A second aspect concerns advanced features. Higher-end chipsets often support more aggressive overclocking, faster memory profiles, and more M.2 slots that do not have to share much bandwidth with other ports. This can translate to shorter loading times, faster data transfers, and less risk of performance dropping sharply when everything is under load.

Then there's the matter of modern connectivity. Native support for latest-generation USB, Thunderbolt controllers, and 2.5 GbE or faster networking isn't just a convenience. In a professional environment, it means fewer bottlenecks in workflows. For those working with heavy files, continuous backups, or content streaming, a richer chipset can make as much difference as a good CPU.

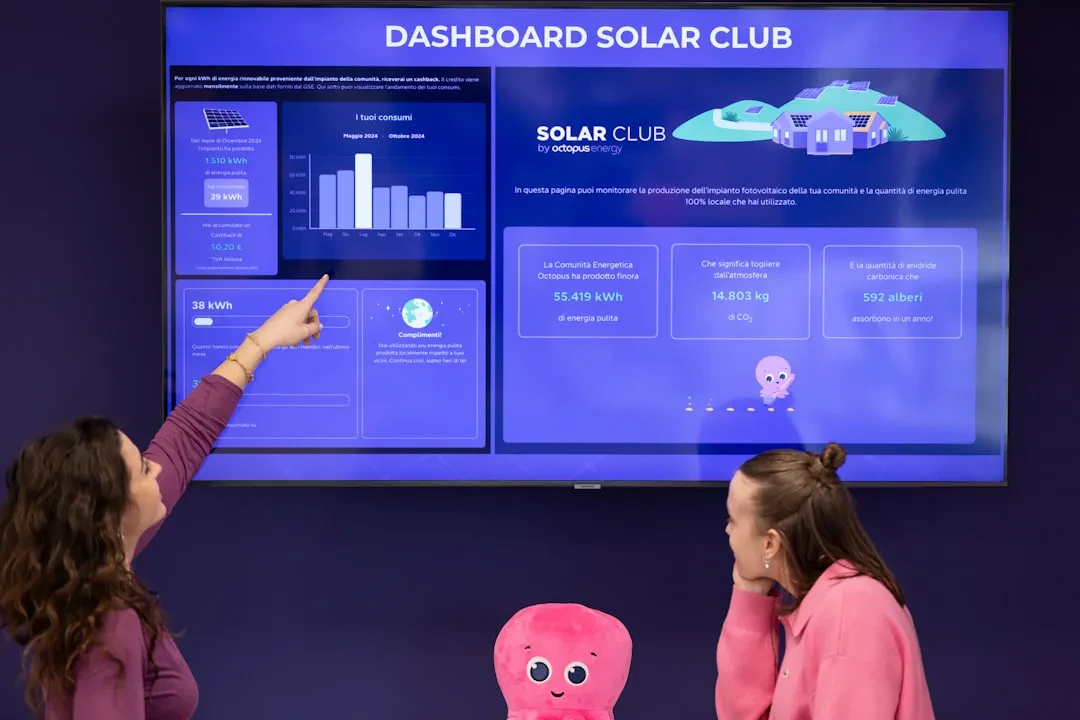

In the server and hosting world, these effects are even more evident. Motherboards designed for data centers use specific chipsets that offer more PCIe lanes for network and storage cards, support for ECC memory, and remote management functions. It is precisely these elements that allow providers like Meteora Web Hosting to build scalable infrastructures, with many NVMe drives, multiple network interfaces, and integrated monitoring systems.

Finally, there's the issue of platform longevity. A more complete chipset allows for upgrading storage, expansion cards, and networking over time without having to immediately replace the entire motherboard. In a personal PC, it means being able to add a second fast SSD a year later. In a server environment, it means being able to expand capacity and functions without heavy downtime.

In summary, the chipset is the connective tissue that makes it possible to truly leverage what the CPU, RAM, and peripherals promise. It is not a component to choose on price alone, but one of the elements that decides how balanced the machine will be today and how ready it will be to grow tomorrow.


Ing. Calogero Bono

Co-founder of Meteora Web. Computer engineer; I develop high-performance digital ecosystems. AI, automation, technical SEO, and web infrastructure. I write about technology to make the complex… simple.
